How to get great ITAM data from Active Directory

This article presents a guide to Active Directory for IT Asset Managers – what it is, what it’s used for, and how you can use it to improve ITAM data quality and reduce audit risk.

What is Active Directory?

Active Directory (AD) is the “address book” for virtually all corporate IT networks. It contains user accounts, computer accounts, corporate hierarchies, policies, and groups. It is both the reference library for information about a particular computer network or group of networks and also the primary tool for their management.

What’s it used for?

Pretty much everything to do with managing IT! At the most basic level it stores a record of a user’s membership of a Windows domain: username, email address, password, group membership, last logon date, first logon date, last password change date and much more. It does the same for each computer that’s joined to the domain. It contains policy settings for managing those computers and users, and it contains groups used for everything from email distribution lists to controlling access to applications. It’s the golden record of truth about your IT estate. Well, at least, that’s the theory – but as anyone who has looked at the contents of an Active Directory will tell you, it’s often out of date and poorly maintained. More on that later.

Who manages it?

Usually, AD is maintained by IT Operations – most often your Windows Server or Network teams. These Domain Administrators hold the “keys to the kingdom” and tend to protect them vigorously. It’s very easy to do widespread damage if you don’t know what you’re doing when it comes to managing AD, and so such “admin” roles are highly restricted. I’ve seen first-hand the damage caused by a misconfiguration in AD, which resulted in two weeks of widespread disruption to the business I was working for. Following the NotPetya attack in 2017, Maersk had to retrieve the sole surviving pre-attack copy of its entire AD, and therefore the blueprint for its entire IT estate, from a branch office in Ghana. Without that copy they would have been unable to recover anything of their pre-attack IT infrastructure.

What doesn’t it contain?

Usually, it won’t contain non-Windows computers, servers, and users. Management of these entities – often Apple Mac and *nix devices (Linux, Unix etc.) – is limited, as is information about them. It also is unlikely to contain information regarding cloud services unless it has been integrated with your cloud provider.

What is the importance of Active Directory to IT Asset Managers?

Active Directory is a Discovery and Inventory source for ITAM. If you don’t have a dedicated ITAM tool it is likely to be the definitive source of truth for the estate you’re trying to manage.

Why should IT Asset Managers care what it contains?

An out-of-date Active Directory is a source of financial, data, and license audit risk.

Audit Risk

Typically, during a license audit, auditors will ask for user and computer counts from your Active Directory. They may also ask for evidence of group membership, particularly for well-established audit risk scenarios such as VDI, Citrix, and non-production use. If your AD is not well maintained, it’s likely that users that have left and computers that are no longer in use are still listed. Whilst you can argue that AD isn’t a true reflection of consumption of licenses it can still be used as evidence in an audit. For example, it may provide evidence of unlicensed use that’s been remediated prior to the audit.

Financial Risk

Financial risk arises from AD often being used to control access to applications. If groups aren’t kept up to date you are effectively assigning a license to a user who no longer requires it. This is particularly the case when groups are used to manage access to VDI, Citrix, or non-production environments.

Data Risk

Failure to maintain AD groups and user accounts also presents a data risk. If you don’t remove users when they leave or remove access to data they no longer require because they’ve moved departments or changed roles, then you’re at risk of breaching data processing and privacy laws.

Positives

There are also positives to IT Asset Managers paying attention to Active Directory. The biggest is that it enables you to reconcile and verify data gathered by your ITAM tools. For example, if your ITAM tool discovers 4500 active devices but AD reports 5000 with active logins you know you’ve got an agent deployment or other discovery problem. This also applies to user accounts.
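The reconciliation described above comes down to comparing two lists of names. A minimal sketch in Python, assuming you can export hostnames from both AD and your ITAM tool; the function name, the sample hostnames, and the rule of matching on the short lowercase name are illustrative assumptions rather than part of any particular tool:

```python
def reconcile(ad_names, itam_names):
    """Compare two collections of hostnames, ignoring case and DNS suffix."""
    def normalise(name):
        # Treat "PC-001.acmecorp.com" and "pc-001" as the same device
        return name.strip().lower().split(".")[0]

    ad = {normalise(n) for n in ad_names}
    itam = {normalise(n) for n in itam_names}
    return {
        # In AD but unknown to the ITAM tool: likely agent or discovery gaps
        "missing_from_itam": sorted(ad - itam),
        # Seen by the ITAM tool but not in AD: non-domain or deleted devices
        "missing_from_ad": sorted(itam - ad),
        # Share of AD devices that the ITAM tool has also seen
        "coverage_pct": round(100 * len(ad & itam) / len(ad), 1) if ad else 0.0,
    }


# Hypothetical sample data
ad_active = ["PC-001.acmecorp.com", "PC-002", "PC-003", "SRV-010"]
itam_seen = ["pc-001", "pc-003", "LAPTOP-77"]
result = reconcile(ad_active, itam_seen)
print(result)
```

The "missing_from_itam" list is the one to take to whoever owns agent deployment; the same comparison works for user accounts.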

Furthermore, if group membership is accurate it becomes possible to track and secure access to non-production environments, or VDI & Citrix environments. This is incredibly useful for proving under audit conditions that only authorised dev/test users have accessed those environments.

Improving AD Accuracy

Label on Tim Berners Lee’s first WWW server (credit Robert Scoble)

As noted above, Active Directory is often poorly maintained. Old accounts aren’t deleted, groups aren’t tidied up, and computer accounts aren’t removed when they’re no longer needed. Your AD admins are often too busy with day-to-day tasks to give a “spring clean” of AD the attention and effort that is needed. In my experience they need to be pushed to do clean-ups, with regulatory requirements such as Privileged Access Reviews for SOX being a catalyst. Because AD is so powerful, and the consequences of doing something wrong so catastrophic, there is also a reluctance to carry out widespread changes. Domain Controllers, the beating heart of AD, are labelled with virtual and sometimes actual “Do Not Touch!!” labels.

The Solution

Before we go any further it’s important to note that ITAM teams are not the Data Owner for Active Directory. The quality and availability of that data is the responsibility of the owners of AD. You are not on the hook for it, but you are a stakeholder, and that means you have the opportunity to help and encourage the owners of AD to clean up their directory. How do you do that? A good starting point is to use free tools to estimate the scale of any problems.

Tools

At its heart, AD is an LDAP directory. LDAP (Lightweight Directory Access Protocol) is an open IETF Standard that’s been widely adopted for maintaining and providing access to directory information. The beauty of this is that data stored in AD is easily accessible via a variety of tools and can also be integrated easily with other applications. Many ITAM tools provide the option for importing AD information as a source of discovery and inventory information.

If you want to dig deeper you can do so and, importantly, you don’t need any special credentials or access privileges. By default, most of the directory is readable by anyone with a user account. Readable means just that: unless you have been granted elevated privileges, all you can do is read and report on data; you can’t modify anything. This is important and should immediately head off any concerns your AD domain admins may have about giving you access. With a read-only account you simply can’t do any damage.

Our recommended tool for querying AD directories and domains is Softerra LDAP Browser (free) although there are many others available.

How do I connect to my Active Directory?

The first step is to find your Domain Controller. This is the computer that logged you on when you entered your username and password on your Windows machine. To do this:

- Open a Command Prompt on your Windows client

- Type echo %logonserver%

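The command returns the logon server’s name prefixed with two backslashes (e.g. \\DC01). Drop the backslashes and, if necessary, append your domain name to get the full hostname (e.g. DC01.acmecorp.com). You can then configure your LDAP browser tool to connect to it.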

- Create a new profile specifying the server FQDN you found (e.g. dc01.acmecorp.com)

- Under the Authentication options use the “Currently Logged On User” option

- Select the RootDSE option (N.B. this will return a lot of results, as you become more proficient with querying LDAP servers you will likely change this to be more focused)

That’s it – your profile is configured and now you can browse the directory structure using the folder tree on the left-hand side of the screen. AD is highly configurable but out of the box you should find Users, Computers, and Groups hierarchies – these are the ones you’ll query most as an ITAM manager.

For a beginner’s guide to using Softerra LDAP Browser to query directory information see https://www.ldapadministrator.com/resources/english/help/la20201/index.php
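Once you are comfortable browsing, an LDAP search filter is the usual way to narrow results. The sketch below, in Python, builds a filter for user accounts whose lastLogonTimestamp is older than a given number of days. AD stores that attribute as a count of 100-nanosecond intervals since 1 January 1601 (UTC), so the cutoff date has to be converted first. The function names are illustrative, and where you paste the resulting filter depends on the tool you use.

```python
from datetime import datetime, timedelta, timezone

# AD timestamps count 100-nanosecond ticks from this date
FILETIME_EPOCH = datetime(1601, 1, 1, tzinfo=timezone.utc)


def to_filetime(dt):
    """Convert a timezone-aware datetime to 100 ns ticks since 1601-01-01 UTC."""
    delta = dt - FILETIME_EPOCH
    seconds = delta.days * 86400 + delta.seconds
    return seconds * 10_000_000 + delta.microseconds * 10


def stale_user_filter(days, now=None):
    """Build an LDAP filter matching users who last logged on over `days` ago."""
    now = now or datetime.now(timezone.utc)
    cutoff = to_filetime(now - timedelta(days=days))
    return (
        "(&(objectCategory=person)(objectClass=user)"
        f"(lastLogonTimestamp<={cutoff}))"
    )


print(stale_user_filter(90))
```

Accounts that have never logged on have no lastLogonTimestamp value and will not match this filter, so they need to be looked for separately.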

Useful AD information for ITAM Managers

You are primarily concerned with Users, Computers, and Groups. By querying these objects you can find out:

1. The last time a user logged on to the network

For our purposes the AD attribute lastLogonTimestamp (shown as LastLogonDate by some tools, including PowerShell) is sufficient to determine this. It can lag the true last logon by up to around two weeks, which doesn’t matter when the threshold is 90 days. The primary use of this attribute is to identify stale accounts – if a user hasn’t logged on for 90 days, are they still an active user? Have they left the company? Or perhaps they’re furloughed or on parental leave? We can certainly use this to identify users who can potentially be removed, which in turn will free up licenses and subscriptions. It’s worthwhile running this check at least monthly, and certainly in the run up to any renewals using a user-based metric.

2. The last time a computer logged on to the network

This provides the same information for computers rather than users. Useful for identifying which computers may be dormant or off the network. If you have an ITAM tool you can use this report to identify licenses that can be harvested and reassigned. For HAM processes this date is useful for pinpointing whether a device has been consigned to a cupboard or someone’s desk drawer.

3. Which user is in a particular group, and usually what that group is used for

This is dependent on how your Windows team have configured Active Directory. Best practice is to use groups in AD to control access to network resources, servers, and applications. If your Windows team are doing this you can identify users listed as having access to a particular application and compare that with their last login time to see if a license can be reassigned or harvested. Similarly, you can check which users are accessing non-production environments, and potentially exclude software installations from compliance calculations, if the license agreement has different rules for non-production usage.
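Combining group membership with last logon dates can be sketched in a few lines of Python. This is an illustration only: it assumes you have already exported the members of an application group and each user’s last logon date, and the group, usernames, and dates below are made up.

```python
from datetime import date


def harvest_candidates(group_members, last_logon, today, max_idle_days=90):
    """Return (user, idle_days) for group members idle longer than the limit.

    Users with no recorded logon are included with idle_days set to None,
    since they need a manual check rather than an automatic decision.
    """
    candidates = []
    for user in sorted(group_members):
        seen = last_logon.get(user)
        idle = None if seen is None else (today - seen).days
        if idle is None or idle > max_idle_days:
            candidates.append((user, idle))
    return candidates


# Hypothetical export: members of an application access group
app_group = {"asmith", "bjones", "cpatel"}
# Hypothetical export: last logon date per user (cpatel has none recorded)
last_logon = {"asmith": date(2024, 5, 20), "bjones": date(2024, 1, 10)}

candidates = harvest_candidates(app_group, last_logon, today=date(2024, 6, 1))
print(candidates)
```

Treat the output as a list of questions to ask, not licenses to remove: someone on long-term leave will look identical to a leaver.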

Generating Reports

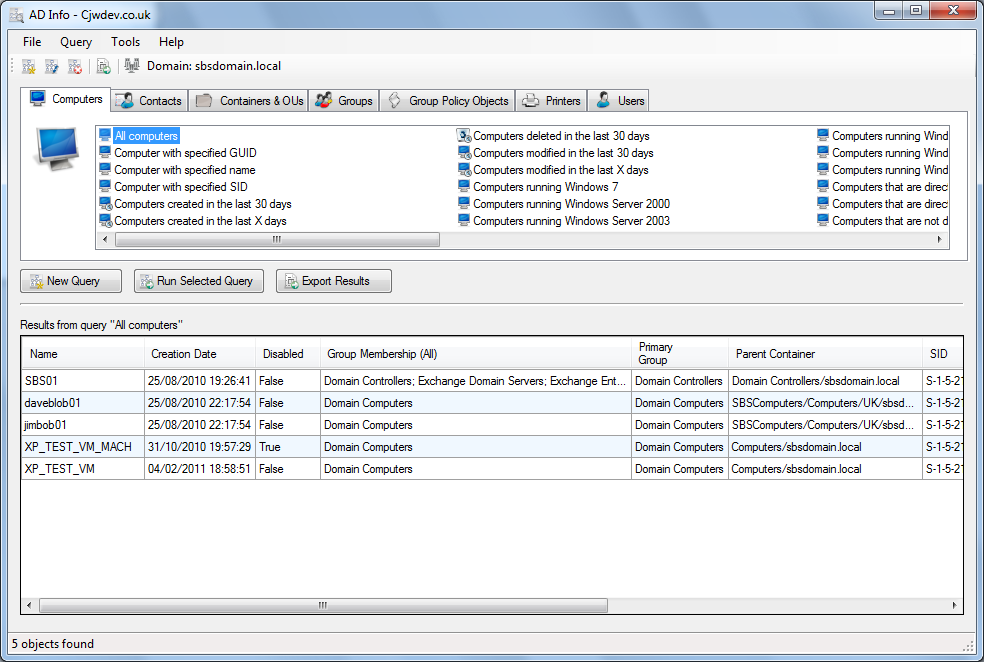

When I was exploring the usefulness of AD for ITAM I homed in on two things – finding the last logon/used date of Users and Computers and identifying potential discovery blind spots of my ITAM tool’s agents. I found the quickest way to generate reports was to use other free utilities – the two I used most frequently were AD Info from CJWDev and the command-line utility OldCmp from JoeWare.

These are both powerful reporting utilities. Of the two, AD Info is the easier to use, with a graphical interface, but OldCmp is very fast and ideal for getting data to use in other reports – for example importing into a spreadsheet. Both are freeware utilities from the “old school” – no warranty, no support, use with discretion – but remember, as long as your user account only has read-only access to AD you can’t do any damage.

Using OldCmp

To wrap up this article I’m going to share the two commands you can use in OldCmp to generate a complete dump of user and computer info from an AD domain in a CSV file, which can then be used for further analysis in Excel:

First, download OldCmp from JoeWare and place it in an easily accessible directory (e.g. C:\oldcmp)

Open a command prompt and enter the following commands according to whether you want to get a users or computers report.

Users: oldcmp -report -users -format csv -age 0

Computers: oldcmp -report -format csv -age 0

This is particularly useful for identifying computers/users which are active but not yet discovered by your ITAM tool.

For the above commands, if you wish to only retrieve a list of users/computers that haven’t been used for 90 days just drop the “-age 0” from the end of the command.

There’s a comprehensive list of commands in OldCmp available here – useful things like identifying disabled users and computers and so on.

The reason I used OldCmp for reporting is that it generates two additional report fields from AD:

pwage – how old in days the password is (i.e. when was it last changed)

lltsage – Last Login Timestamp Age – how long ago the last successful logon for that user or computer was.

This parsing of otherwise complex AD fields (pwdLastSet & LastLogonTimestamp) means you can easily build, for example, Excel pivot tables showing which users and accounts are potentially stale and should be inactivated/disabled/deleted.
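If you would rather script the analysis than build a pivot table, the same stale-account check can be sketched in Python. The column names here are assumptions: lltsage and pwage are the fields described above, but the "cn" name column and the exact header layout of a real OldCmp export may differ, so check your own file and adjust. The sample data is made up and stands in for the exported CSV.

```python
import csv
import io

# Hypothetical sample standing in for an exported report;
# real column names and order may differ
sample = io.StringIO(
    "cn,pwage,lltsage\n"
    "asmith,30,2\n"
    "bjones,400,181\n"
    "svc-backup,900,\n"
)


def stale_rows(handle, name_col="cn", age_col="lltsage", threshold=90):
    """Return (name, age_in_days) for rows older than threshold.

    Rows with a blank or non-numeric age are returned with None so that
    accounts with no recorded logon are reviewed rather than ignored.
    """
    stale = []
    for row in csv.DictReader(handle):
        raw = (row.get(age_col) or "").strip()
        if not raw.isdigit():
            stale.append((row[name_col], None))
        elif int(raw) > threshold:
            stale.append((row[name_col], int(raw)))
    return stale


rows = stale_rows(sample)
print(rows)
```

To run it against a real export, replace the sample with `open("users.csv", newline="")`, where the filename is whatever you saved the report as.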

A note on PowerShell

PowerShell is Microsoft’s comprehensive utility for managing a Windows estate. It’s incredibly powerful and quite often you need special permissions to run it, so I’ve considered it to be out of scope for this article. My view is that the above utilities are safer for a non-administrator to go digging around in AD data.

Conclusion

Active Directory is a valuable source of information for your ITAM team. You can use it to reduce costs and manage risks. By running your own reports, you can get a sense of how complete your ITAM tool’s coverage is, and also how tidy your AD is. You can use this information to shine a light on the blind spots your ITAM tool isn’t seeing, and to engage with your AD administrators on improving the quality of data in AD. A closing note on this: your AD admins are busy, and anything you can do to help them will be appreciated, but make sure you approach them in the right way. As with any stakeholder engagement the right way is dependent on your organisational culture. Get it right and you’ll cut costs, reduce risks, and have built a strong relationship with a key stakeholder. Remember, though, that they are the Data Owner for AD and ultimately it’s their responsibility to ensure that their data is accurate and complete.

AJ, this is a great primer, but it doesn’t reference the role AD can play in software optimisation in a mature ITSM estate. If you have msi packages / s/w removal scripts that are run when a user or machine is added to an AD group, then AD becomes a powerful way to optimise software deployments. If you have single sign on it can also be a way to help you understand exactly what is being used and by whom (beyond what is discoverable by a SAM tool) which also drives some really powerful optimisation opportunities.

It is only relatively mature companies that use AD in this way, however many companies shifted to this approach to help with Win10 rollouts, so they are already part way there. An article on how to use AD and single sign-on for s/w optimisation would also be really helpful.

Thanks Kylie – will get to work on that! In my last corporate role we’d just started using AD groups in that way – mainly to control access to non-prod environments which were licensed by MSDN. And SSO was on the way in order to improve SOX control performance.

Further to Kylie’s comment… My understanding is that ‘permissions’ for software deployments are in AD, and would ‘count’ in a compliance audit, whether or not the software had been deployed under that permission.

Thanks Sherry – my take on it – and this was from using groups to manage MSDN subs – was that record-keeping was key. We had a policy and a control that stated you could only access those non-prod environments via membership of an AD group. For audit purposes we regularly audited access to those systems and tracked changes to the relevant AD groups, with the idea being that that would provide good proof in the event of an MS audit.

I’m sure we all remember Microsoft’s view on license consumption for Citrix deployments – namely if it’s on a server, everyone with access to that server must have a license assigned. In the time before we had SAM in place that led to us virtually abandoning thin client access to non-standard apps and getting off Citrix as a platform. Had I stayed with that organisation my next project was to develop the necessary controls to enable thin client computing, which would have been based on AD groups, controls, and record-keeping.

In my experience it’s very difficult to get access to Active Directory data due to the lack of controls surrounding maintenance of the information it holds. I agree it’s a valuable data source for ITAM Professionals to compare against deployed toolsets to check the % of coverage across the IT Estate and look at what actions can be taken to remediate any exceptions and optimise ROI.

I would love the opportunity to test the tools you have recommended here and see the results once the data is analysed to help get a clearer picture of what we think we have v what we actually have. I shall be sharing your article with my colleagues to open a discussion on if use of the free tools would be permissible in the organisation I work for.

Thank you for sharing this with us all.

That’s good to hear Den1se – please let us know how it goes. I think the main thing about gaining access is that you only need Read access, and therefore can’t do any damage. Experience tells me that this is the way to go – you absolutely don’t want read/write access! That way, it can’t be your fault if something goes wrong 🙂